[…] we apperceive through our sieves as much as we sieve through our apperception. We appersieve, if you will. Or, if you go back to Kant ([1781] 1965), who defined the ego as the transcendental unity of apperception (whatever that means), we are our sieves.

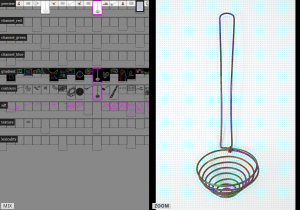

Indeed, crucially, sieves have to take on (and not just take in) features of the substances they sieve, if only as “inverses” of them. A hole in the ground, for example, constitutes a simple sieve: anything with a diameter less than the hole will fall through; anything with a diameter larger than the hole will stay on top. In this way, to sieve a substance, the sieve must often have an (elective) affinity with the substance to be sieved and, in particular, with the qualities sieved for: in this case, size. In some sense, all sieves are inverses or even shadows of the substances they sort. By necessity, they exhibit a radical kind of intimacy.

Note, then, that sieves, such as spam filters, have desires built into them (insofar as they selectively permit certain things and prohibit others); and they have beliefs built into them (insofar as they exhibit ontological assumptions). And not only do sieves have beliefs and desires built into them (and thus, in some sense, embody values that are relatively derivative of their makers and users); they may also be said to have emergent beliefs and desires (and thus embody their own relatively originary values, however unconscious they and their makers and users are of them). In particular, the values of the variables are usually steps ahead of the consciousness of the programmers (and certainly of users), and thus constitute a kind of prosthetic unconsciousness with incredibly rich and wily temporal dynamics. Note, then, that when we make algorithms and then set those algorithms loose, there is often no way to know what’s going to happen next (Bill Maurer, personal communication).

Paul Kockelman, in “The anthropology of an equation: Sieves, spam filters, agentive algorithms, and ontologies of transformation”